English as the Programming Language of the AI Era

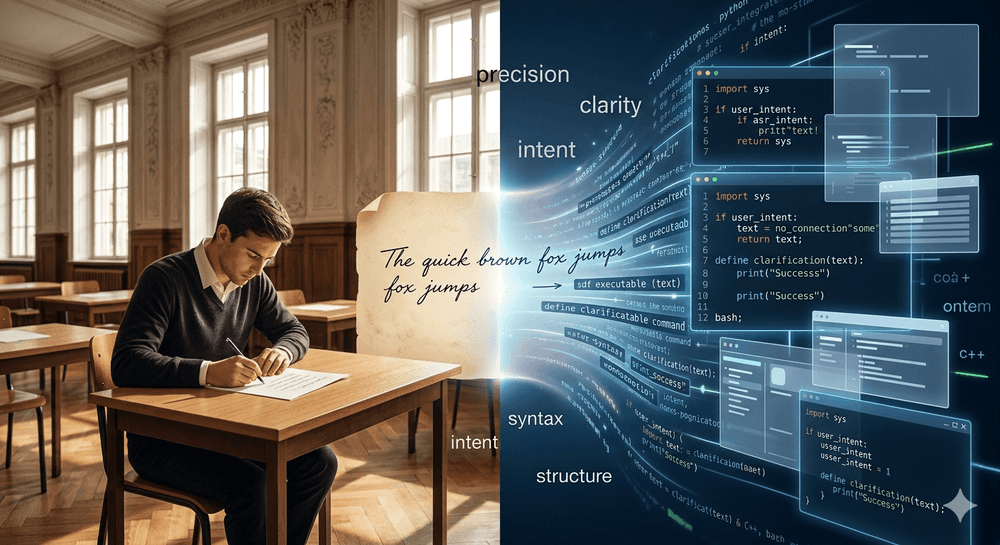

In the last 18 months, a striking idea has moved from a provocative soundbite to a practical reality: natural language—especially English—will increasingly function like a programming language. NVIDIA CEO Jensen Huang has repeatedly framed the shift as a move from syntax-heavy coding to intent-driven instruction, where users “program” computers by expressing what they want in human language. In other words, the competitive edge is no longer only writing code—it is communicating precisely, unambiguously, and persuasively in language that an AI can reliably execute.

That framing has profound implications for education and credentials: if prompting becomes a core workplace skill, then the world will need credible proof of who can truly do it. And that proof will not come from short, lightweight, AI-scored “English tests.” It will come from extensive, high-quality language assessment—the kind that measures active language skills under robust quality controls, such as Cambridge English Qualifications.

1) Why English is becoming “the interface layer” for computing

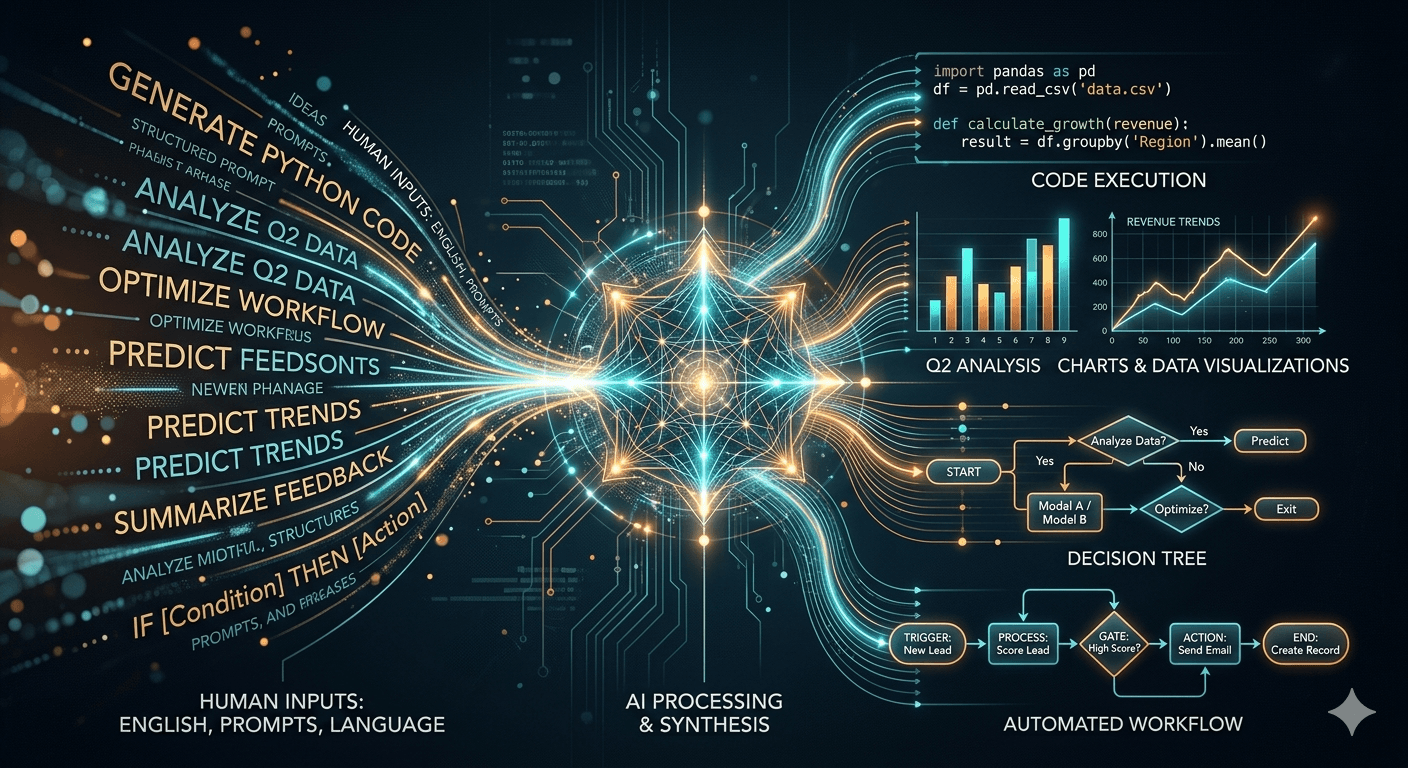

Huang’s argument is not that C++ or Python vanish. Rather, the front door to computing changes: you describe intent in language, and AI translates that intent into working outputs—code, workflows, analyses, decisions. This is already how many teams use generative AI: the user specifies goals, constraints, edge cases, tone, format, and evaluation criteria; the model proposes solutions; the user iterates. That loop is closer to “management by instruction” than traditional coding.

The implication is simple: English fluency is no longer just a soft skill. It becomes part of the technical stack. If you cannot formulate requirements clearly, your “program” (your prompt) fails—just as code fails when requirements are wrong.
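To make the "prompt as program" idea concrete, here is a minimal sketch that treats the parts of an instruction named above (goal, constraints, edge cases, tone, format, evaluation criteria) as fields of a small specification. The `PromptSpec` class and its method names are hypothetical and invented for illustration; they are not part of any AI vendor's API, and nothing here calls a model.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of an instruction listed in the text."""
    goal: str
    constraints: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    tone: str = ""
    output_format: str = ""
    evaluation_criteria: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Return the names of fields left empty (a rough requirements check)."""
        return [name for name, value in vars(self).items() if not value]

    def render(self) -> str:
        """Assemble the non-empty parts into one plain-English prompt."""
        lines = [f"Goal: {self.goal}"]
        for title, items in (
            ("Constraints", self.constraints),
            ("Edge cases", self.edge_cases),
            ("Evaluation criteria", self.evaluation_criteria),
        ):
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        if self.tone:
            lines.append(f"Tone: {self.tone}")
        if self.output_format:
            lines.append(f"Output format: {self.output_format}")
        return "\n".join(lines)


if __name__ == "__main__":
    # Illustrative values only.
    spec = PromptSpec(
        goal="Summarize the attached incident report",
        constraints=["Maximum 150 words"],
        output_format="Three bullet points",
    )
    print(spec.render())
    print("Unspecified:", spec.missing_parts())
```

The point of the sketch is the `missing_parts` check: an instruction that leaves edge cases or evaluation criteria unstated is underspecified in much the same way as code written against incomplete requirements.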

And there is a second reason English dominates this interface layer: much of the training data for today’s LLMs is derived from the web, and the web is heavily English. Common Crawl in particular is widely documented as a foundational source in LLM pre-training pipelines, so the distribution and biases of web text matter greatly. While LLMs are increasingly multilingual, the overall ecosystem remains strongly shaped by English-heavy web content and by English-centric tooling and evaluation traditions.

So when Huang says English can become “the most powerful programming language,” the deeper point is this: the highest-leverage human skill is the ability to express intent in the language the machine best internalizes at scale.

2) Prompt engineering is not “chatting”: it requires high-level productive skills

Prompt engineering, at any serious level, is not about asking a chatbot a question. It is about operationalizing language:

Precision: defining constraints, scope, and acceptance criteria.

Structure: decomposing tasks; sequencing instructions; defining output formats.

Nuance: hedging, prioritizing, and disambiguating meaning (e.g., “optimize for accuracy over brevity”).

Argumentation: justifying trade-offs; evaluating alternatives; defending decisions.

Revision: iteratively refining based on model output and error patterns.

Those are productive (active) language abilities—especially writing and speaking, not merely reading/listening. And productive skills are exactly where superficial testing fails most often.

If English is becoming the “programming layer,” then advanced writing is the new source code—and advanced interaction is the new debugging. Huang’s broader theme—moving from “writing syntax” to “expressing intent” and iterating conversationally—makes the quality of the human’s language output decisive.

3) Why “short, AI-driven English tests” are structurally insufficient

Many modern “quick tests” promise fast results using short samples. But language ability, especially at higher CEFR levels, cannot be credibly inferred from so little evidence. The reason is not philosophical; it is measurement science:

You can’t measure complex performance without enough performance. A language test is an inference: from observed samples to a broad ability claim. Validity requires adequate evidence aligned to the claim. Short tests, by design, limit the diversity and depth of evidence.

Productive skills require extended output. Speaking and writing are where candidates demonstrate discourse control, cohesion, register, argumentation, and interaction management. Cambridge’s own speaking assessment criteria illustrate how multi-dimensional real proficiency is (e.g., interactive communication, discourse management, lexical/grammatical resource). You cannot capture these dimensions reliably in a 2–5 minute exchange or a paragraph.

AI scoring is not a magic shortcut for validity. AI can help with marking, but it does not remove the need for (a) high-quality tasks, (b) sufficient sampling, and (c) careful validation and ongoing quality monitoring. Modern validation frameworks emphasize that validity is an ongoing, evidence-based argument, not a one-time claim.

In short: AI can accelerate parts of assessment. It cannot replace the foundational requirement: enough high-quality evidence of real performance.
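One way to see why test length matters is the Spearman-Brown formula from classical test theory, which predicts how score reliability changes when a test is lengthened or shortened by a factor k: predicted reliability = k·r / (1 + (k − 1)·r). The sketch below is only an illustration: it assumes the shortened test consists of items comparable to the original (a simplification), the numbers are invented and not drawn from any real exam, and reliability is only one ingredient of validity.

```python
def spearman_brown(reliability: float, length_factor: float) -> float:
    """Predict reliability after changing test length by `length_factor`.

    reliability   -- reliability of the original test, between 0 and 1
    length_factor -- new length divided by original length (0.25 = quarter length)
    """
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")
    if length_factor <= 0:
        raise ValueError("length_factor must be positive")
    return length_factor * reliability / (1 + (length_factor - 1) * reliability)


if __name__ == "__main__":
    # Illustrative assumption: a full-length test with reliability 0.90.
    full = 0.90
    for factor in (1.0, 0.5, 0.25):
        print(f"length x{factor}: predicted reliability {spearman_brown(full, factor):.2f}")
```

Under these assumptions, cutting a test with reliability 0.90 to a quarter of its length brings the predicted reliability down to roughly 0.69, which is the quantitative side of the claim that less evidence supports weaker inferences.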

4) What “proof” looks like: why extensive assessments matter

High-stakes users—employers, universities, professional bodies—need a credential that is defensible. That means:

tasks that resemble real-world language use,

enough time and breadth for candidates to demonstrate ability,

scoring that is consistent, fair, and monitored,

and a research-driven commitment to validity and reliability.

Cambridge English explicitly frames its quality philosophy around validity (“authentic test of real-life English”) and reliability (“consistent and fair”), and publishes principles and supporting materials to make these claims transparent. This is exactly the sort of infrastructure that makes a language credential usable as “proof” rather than just a quick indicator.

Crucially for the AI era, Cambridge exams give candidates room to demonstrate productive competence—particularly in speaking, where criteria capture interaction, coherence, and control, and in writing, where extended responses allow evidence of organization, argumentation, and register.

And that matters because prompt engineering competence is closer to extended written production (specifying constraints, formats, evaluation criteria) and interactive clarification (iterating, diagnosing misunderstandings) than to passive comprehension.

5) “English as code” raises the bar—so the credential must raise the standard

Here is the uncomfortable truth: if English becomes the programming layer, then mediocre English becomes technical debt.

Ambiguous phrasing produces ambiguous outputs.

Weak vocabulary reduces specificity.

Poor discourse control breaks multi-step instruction.

Limited pragmatic competence increases risk in professional contexts (compliance, HR, customer messaging).

And as the stakes rise, credentials that over-claim based on under-sampling will become liabilities.

That is why “short AI-driven English exams” are the wrong answer to the right question. They may be convenient for low-stakes screening, but they do not provide the quantity and quality of evidence needed to certify someone’s ability to use English as a precise operational tool—i.e., as a “programming language.”

6) The strategic conclusion: the AI economy will demand language credentials with depth

Jensen Huang’s vision points to a massive expansion of who can “build” with AI—because the barrier shifts from learning syntax to expressing intent. But that democratization comes with a new form of stratification: those who can communicate with high precision will consistently outperform those who cannot.

Therefore, the most future-proof credential is one that measures:

high-level productive competence (speaking & writing),

under rigorous quality standards,

with enough evidence to justify a high-stakes claim.

That is precisely the space where extensive, established assessments like Cambridge English Qualifications remain not only relevant, but more essential than ever.

A final way to put it

In the AI era, English is not only a language; it is an interface. And interfaces require reliability. If English is the “programming language of the future,” then we must treat language competence the way we treat engineering competence: prove it with robust measurement, not with shortcuts.

Written by: Peter Kaithan - Swiss Exams

Article Sources: [cambridgeenglish.org] [cambridge.org] [techrepublic.com] [moneycontrol.com]